SciPy is partnering with JOSS! Part 1

The Python in Science Conference (SciPy) publishes conference proceedings every year (as one does). Our process differs a little from most conferences in that review occurs in two stages. [1]

In stage one, the Program Committee and their area chairs solicit abstracts for talks and posters. These abstracts are reviewed for scientific merit by a group of volunteers, where each volunteer may be asked to review somewhere between 6 and 12 different abstracts.

In stage two, the Proceedings Committee invites authors of talks or posters approved by the Program Committee to submit a full paper for inclusion in the conference proceedings (this is optional for the authors). The full papers are reviewed for clarity and reproducibility, and each volunteer is asked to review at most one paper.

This second stage -- the full paper review -- is a large effort.

SciPy maintains its own publication software (open source, permissively licensed, and available at github.com/scipy-conference/scipy_proceedings). The software requires constant maintenance to keep up with changing versions of its Python and LaTeX dependencies, and we have a long backlog of feature requests in important areas such as improved support for non-Latin characters.

SciPy also hosts a web service that compiles submitted papers so authors can view them in real time (also open source, permissively licensed, and available at github.com/scipy-conference/procbuild). This service requires not only maintenance and improvement, but also money to rent server space [2] to run it for the four-ish months leading up to, and slightly after, the conference in July.

The Proceedings Committee chairs start work on these two pieces of software in January of each year. We have about five months to get everything into a good place before invited authors start submitting their papers. Once submissions begin, the committee helps authors troubleshoot issues with git, docutils, and LaTeX. At the same time, we are emailing potential reviewers about papers, and then emailing reminders to potential reviewers about papers, and then emailing a second batch of potential reviewers about papers, etc.

In a typical year, somewhere between 50% and 75% of the people who have volunteered to review for the conference either decline to review a paper or never respond to any of our emails. The Proceedings Committee endeavors to have two reviewers for every submitted full paper, which in the past has meant that the committee chairs themselves have stepped in to review somewhere between two and six papers each. During the pandemic we have relaxed this expectation, for obvious reasons, and now field at least one reviewer per paper.
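To put those percentages in concrete terms, here is a rough back-of-the-envelope sketch of how many invitations it takes to land two reviewers per paper. It assumes every invited volunteer accepts independently with the same probability, which is a simplification, and the function name is made up for illustration.

```python
import math


def invitations_needed(reviewers_wanted: int, non_response_rate: float) -> int:
    """Expected number of invitations needed to end up with the wanted reviewers.

    Assumes each invitation is accepted independently with probability
    (1 - non_response_rate). This is an average, not a guarantee.
    """
    return math.ceil(reviewers_wanted / (1.0 - non_response_rate))


for rate in (0.50, 0.75):
    needed = invitations_needed(2, rate)
    print(f"{rate:.0%} decline or never reply: invite about {needed} people per paper")
```

Under that assumption, two reviewers per paper means roughly four to eight invitations per paper, before counting any reminder emails.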

After reviews are over, SciPy publishes the conference-ready version of the proceedings. After the conference is over, SciPy publishes the proceedings again -- this time, as a finalized version that includes slide decks and posters from the conference, which also need to be collected and processed. [3] The Proceedings Committee officially ends its work for the year in August.

This is quite a time commitment for the conference organizers (8 months of the year -- maybe 100-160 hours of work?), and represents a pretty large barrier to entry for members of the community who would be interested in volunteering to help run this part of the conference.

This is all to say that the SciPy Proceedings Committee has been very interested in:

- modernizing our publication infrastructure; and,

- automating some of the more time-consuming parts of paper reviews.

We are partnering with JOSS, the Journal of Open Source Software, over the next two years to incorporate some of their publication tools into our own workflows. This kind of partnership has already been completed to great effect by rOpenSci. The review processes at JOSS and SciPy differ in a few substantial ways, so we are focusing on two areas in particular:

- automated review management; and,

- more robust paper compilation and publishing.

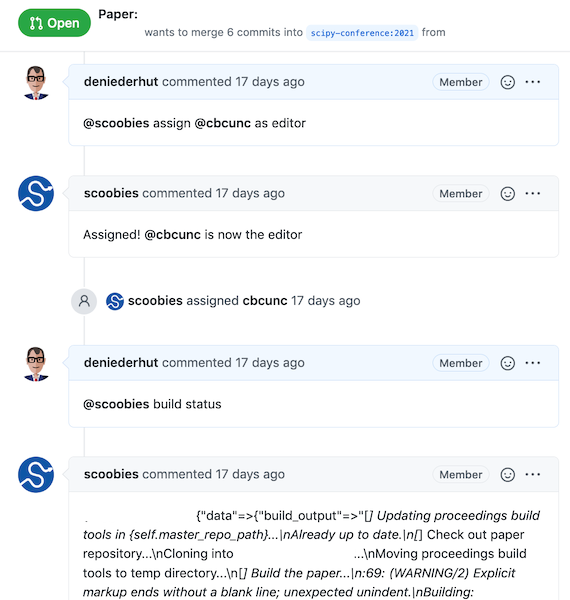

We started in 2021 on the first point -- reviewer management. Like JOSS, SciPy reviews are conducted in the open on GitHub, so adopting their review management bot (called Buffy) seemed like a good first step for us (we named ours Scoobies). Review management at JOSS follows a chatops workflow, where a service performs repeatable and time-consuming steps on demand by interacting with users in a chat-based application. You can read more about chatops on Arfon's blog.
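To make the chatops idea concrete, here is a minimal sketch of a bot reading comments on a review thread and acting on the ones that look like commands. It is written in Python purely for illustration (Buffy itself is a Ruby project), and the command wording, the `PaperReview` class, and the `handle_comment` function are all hypothetical; the real bot's commands may differ.

```python
import re
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PaperReview:
    """State for one paper's review thread (hypothetical structure)."""
    editor: Optional[str] = None
    reviewers: list = field(default_factory=list)


# Hypothetical command grammar, not taken from the real bot.
COMMANDS = [
    (re.compile(r"^@scoobies assign @(\w[\w-]*) as editor$"), "assign_editor"),
    (re.compile(r"^@scoobies add @(\w[\w-]*) as reviewer$"), "add_reviewer"),
    (re.compile(r"^@scoobies list reviewers$"), "list_reviewers"),
]


def handle_comment(review: PaperReview, comment: str) -> Optional[str]:
    """Return the bot's reply to a comment, or None if it is not a command."""
    text = comment.strip()
    for pattern, action in COMMANDS:
        match = pattern.match(text)
        if match is None:
            continue
        if action == "assign_editor":
            review.editor = match.group(1)
            return f"@{review.editor} is now the editor."
        if action == "add_reviewer":
            user = match.group(1)
            if user not in review.reviewers:
                review.reviewers.append(user)
            return f"@{user} added as reviewer."
        if action == "list_reviewers":
            names = ", ".join(f"@{r}" for r in review.reviewers) or "none"
            return f"Reviewers: {names}"
    # Ordinary discussion comments are ignored by the bot.
    return None
```

The point of the pattern is that the repetitive bookkeeping lives in one place: anyone on the thread can type a command, and the bot updates its records and replies in the same conversation.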

Scoobies keeps track of which committee member is assigned as editor for each paper, and who the selected reviewers are. The bot can also trigger remote paper compilations, and report back about anything that went wrong. Here is what that looks like in the real world:

Scoobies can also perform automated checks of reference sections, and manage the tags we assign to papers to keep track of their progress through the review process.
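As a rough idea of what an automated reference check could look like, the sketch below flags reference entries with a missing or oddly formatted DOI. This is an assumption about the kind of check involved, not a description of what the bot actually does; the entry format, the `check_references` function, and the DOI pattern are all made up for illustration.

```python
import re

# Loose pattern for a DOI such as 10.1234/example; an assumption, not a full validator.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")


def check_references(references: list) -> list:
    """Return human-readable problems found in a list of reference entries.

    Each entry is assumed to be a dict with a "key" and an optional "doi".
    """
    problems = []
    for ref in references:
        key = ref.get("key", "<unknown>")
        doi = ref.get("doi", "").strip()
        if not doi:
            problems.append(f"{key}: missing DOI")
        elif not DOI_PATTERN.match(doi):
            problems.append(f"{key}: malformed DOI {doi!r}")
    return problems
```

A bot could post the returned list as a comment on the review thread, so authors see the problems without an editor having to read the bibliography line by line.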

The second step will involve incorporating pandoc into our publication process. This will be a much larger lift, since we do some custom style injections and extra work to manage our own table of contents in the combined proceedings, but will allow us to support a wider variety of document formats, like markdown papers and possibly also Jupyter notebooks. We will be starting on this work in 2022.

I'd like to close here with some acknowledgments:

- Thanks to Meghann Agarwal at SciPy, for fostering this partnership

- Thanks to Arfon Smith, editor-in-chief at JOSS, for funding for this work, and for helping us think through our approach

- Thanks to Juanjo Bazán, also at JOSS, for all of his Ruby expertise, his willingness to help, but especially his patience with my silly questions about Buffy

1. There is a third stage, where presenters can submit their slide decks or posters for publication (e.g. as PDFs or PowerPoint decks), but these are not reviewed for content.

2. Currently, this is being donated to SciPy by RENCI, the Renaissance Computing Institute at UNC Chapel Hill, for which we are very grateful.

3. These are archived for us by Zenodo (for which we are also very grateful), in a curated group called SciPy.